In early 2026, most discussions around AI video are still focused on surface-level improvements. People are comparing clip length, visual quality, and how realistic a scene looks.

But this focus misses the bigger picture.

The real shift is not happening in what we see. It is happening in how these systems are built and how they function in real workflows.

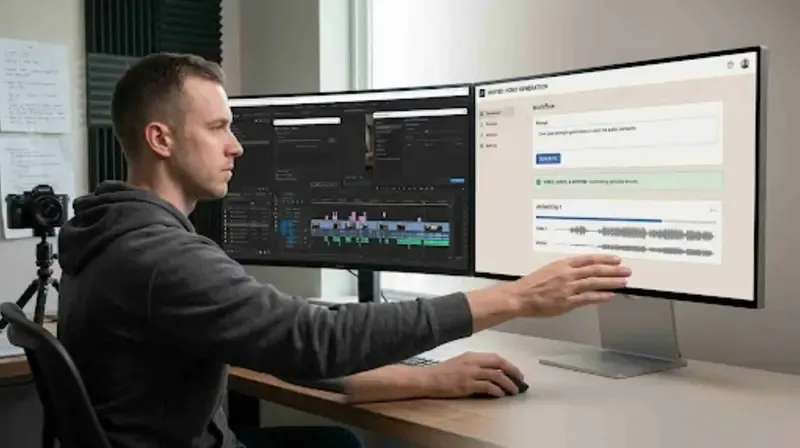

AI video is moving away from fragmented generation toward more structured systems designed for production use. This shift is subtle, but it fundamentally changes how video content is created.

This is where Seedance 2.0, through its integration on the Higgsfield platform, is starting to be used within real production workflows.

Moving Away From Fragmented Video Pipelines

To understand this shift, it helps to look at how AI video was created before.

Most tools followed a layered approach:

- Generate visuals first

- Add audio later

- Apply lip-sync as a separate step

These systems did not model the relationship between picture, sound, and speech. They simply combined separately generated outputs after the fact.

This often resulted in:

- Slight delays between sound and motion

- Inconsistent character behavior

- Scenes that felt visually correct but physically disconnected

These issues were not incidental bugs. They were a result of the architecture itself: stages that run independently share no timeline or scene state to keep them aligned.
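To make the "slight delays between sound and motion" concrete, here is a minimal sketch of how that drift could be measured. It assumes you already have timestamps for visible events (such as footsteps) and for the matching audio onsets; the function name and the sample numbers are hypothetical and do not come from any specific tool.

```python
from statistics import mean


def av_offsets_ms(motion_events_s, audio_onsets_s):
    """Pair each visual event with its nearest audio onset.

    Returns one offset per visual event in milliseconds
    (positive means the sound arrives after the motion).
    """
    offsets = []
    for t_video in motion_events_s:
        nearest = min(audio_onsets_s, key=lambda t_audio: abs(t_audio - t_video))
        offsets.append((nearest - t_video) * 1000.0)
    return offsets


# Hypothetical timestamps in seconds, for illustration only.
footsteps_video = [0.50, 1.00, 1.50, 2.00]
footsteps_audio = [0.56, 1.07, 1.58, 2.09]

offsets = av_offsets_ms(footsteps_video, footsteps_audio)
mean_offset_ms = mean(offsets)

print(f"mean offset: {mean_offset_ms:.0f} ms")
print(f"drift over the clip: {offsets[-1] - offsets[0]:.0f} ms")
```

In this made-up example the audio trails the picture by about 75 ms on average, and the gap widens over the clip, which is the kind of mismatch a layered pipeline has no mechanism to prevent.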

The Rise of Unified Video Systems

The newer approach treats video as a unified system.

Instead of generating visuals and audio separately, modern workflows process multiple elements together:

- Motion

- Sound

- Scene composition

Because these elements are generated together rather than aligned afterward, the outputs are more consistent and easier to use.

Within workflows on the Higgsfield platform, Seedance 2.0 reflects this direction by supporting multi-shot video generation with synchronized audio and consistent characters.

This reduces the need for stitching together multiple outputs.

Why Synchronization Is a Key Differentiator

One of the most noticeable improvements in newer systems is synchronization.

In older workflows:

- Audio is added after video

- Lip-sync is approximated

- Environmental sound often feels generic

Even small mismatches can make content feel less realistic.

In more integrated systems:

- Audio aligns more closely with motion

- Speech matches facial movement more naturally

- Sound reflects the environment of the scene

These improvements make outputs more usable without heavy editing.

Moving Beyond Prompt-Only Workflows

Another shift is happening in how creators interact with AI systems.

For a long time, text prompts were the primary method of control. While they are useful, they are not precise enough for detailed direction.

Creators often need:

- Specific camera movement

- Defined composition

- Controlled tone and pacing

Text alone cannot reliably deliver this.

Within the Higgsfield ecosystem, Seedance 2.0 runs in workflows that accept structured inputs such as image references, motion cues, and audio direction.

This reduces trial-and-error and improves predictability.
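As an illustration of what "structured inputs" can look like in practice, the sketch below describes a two-shot request as data instead of a single free-text prompt. Every class, field, and file name here is hypothetical. This is not the Higgsfield or Seedance API, only one way a team might organize the same information before handing it to whatever tool they use.

```python
import json
from dataclasses import asdict, dataclass, field
from typing import List, Optional


@dataclass
class ShotSpec:
    """One shot, described with explicit fields (all names are hypothetical)."""
    description: str
    camera_move: str                        # e.g. "slow dolly-in"
    duration_s: float
    reference_image: Optional[str] = None   # path to a composition reference
    audio_direction: Optional[str] = None   # e.g. "quiet room tone"


@dataclass
class VideoRequest:
    """A multi-shot request that shares one character reference."""
    character_reference: str
    shots: List[ShotSpec] = field(default_factory=list)

    def validate(self) -> List[str]:
        """Return a list of problems; an empty list means the request is usable."""
        problems = []
        if not self.shots:
            problems.append("request has no shots")
        for i, shot in enumerate(self.shots):
            if shot.duration_s <= 0:
                problems.append(f"shot {i}: duration must be positive")
            if not shot.camera_move.strip():
                problems.append(f"shot {i}: camera move is missing")
        return problems


request = VideoRequest(
    character_reference="refs/presenter_front.png",
    shots=[
        ShotSpec("Presenter walks into the studio", "tracking shot", 4.0,
                 audio_direction="footsteps on a wooden floor"),
        ShotSpec("Close-up as she starts speaking", "slow dolly-in", 3.0,
                 reference_image="refs/closeup_framing.png"),
    ],
)

problems = request.validate()
payload = json.dumps(asdict(request), indent=2)
print(problems or payload)
```

Writing the request this way means camera movement, composition, and audio intent are checked before generation starts, instead of being discovered as missing after a failed render.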

Solving Character Consistency Challenges

Character inconsistency has been one of the biggest challenges in AI video.

In many tools:

- Characters change slightly between shots

- Lighting and textures vary

- Facial features are not stable

This makes it difficult to build narratives or maintain brand identity.

Newer systems address this by treating characters as persistent elements.

Within workflows on the Higgsfield platform, this allows creators to maintain consistency across multiple scenes and reduces the need for corrections.

Multi-Shot Video Instead of Isolated Clips

Earlier AI tools often generate single, isolated clips.

This creates challenges when trying to build longer sequences, because each clip has to be matched to the others by hand.

Seedance 2.0 focuses on generating multi-shot video within a single process.

This enables:

- Smooth transitions between shots

- Consistent lighting and environment

- Continuous narrative flow

Instead of assembling clips manually, the system supports more structured output.

Efficiency Beyond Speed

Speed is often highlighted as a key benefit of AI tools.

However, speed alone does not define efficiency.

If a video requires multiple corrections after generation, the total time spent remains high.

More integrated workflows reduce this problem by producing outputs that are closer to final form.

This allows creators to:

- Reduce post-production effort

- Use fewer tools

- Iterate more quickly

Within the Higgsfield platform, this results in workflows that are better suited for real production use.
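The argument that speed alone does not define efficiency comes down to simple arithmetic: total time is generation time plus the time spent on corrections. The numbers below are invented purely to show the shape of the trade-off and are not measurements of any tool.

```python
def total_minutes(generation_min, correction_passes, minutes_per_correction):
    """Total time to a usable video: generation plus all correction passes."""
    return generation_min + correction_passes * minutes_per_correction


# Hypothetical figures for illustration only.
# A fast tool whose output needs five rounds of fixes:
fragmented = total_minutes(generation_min=2, correction_passes=5,
                           minutes_per_correction=15)
# A slower tool whose output needs one round of fixes:
integrated = total_minutes(generation_min=6, correction_passes=1,
                           minutes_per_correction=15)

print(f"fragmented workflow: {fragmented} min")  # 2 + 5 * 15 = 77
print(f"integrated workflow: {integrated} min")  # 6 + 1 * 15 = 21
```

Under these assumptions the tool that generates three times slower still reaches a usable result in well under a third of the time, because correction passes dominate the total.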

Why This Shift Matters for Agencies

As content demand increases, agencies need to produce:

- High-quality visuals

- Consistent branding

- Scalable content

Tools that rely on fragmented workflows may still work for experimentation, but they are difficult to scale.

According to McKinsey's analysis of AI in film and TV production, improvements in AI-driven production workflows can significantly reduce production costs and increase efficiency across creative teams.

This highlights why more structured systems are gaining attention.

From Generation to Production

The most important shift is conceptual.

AI video is no longer just about generating clips.

It is about producing usable content.

This changes how creators evaluate tools.

Instead of asking:

“What can this generate?”

They are now asking:

“How usable is the output without additional work?”

Seedance 2.0, through its integration on the Higgsfield platform, reflects this transition toward production-oriented workflows.

Conclusion

The AI video space is evolving, but not all tools are evolving in the same direction.

While many continue to focus on visual improvements, a deeper shift is happening in how these systems are built and used.

The move from fragmented pipelines to unified workflows is changing what AI video can achieve.

As expectations continue to rise, the difference between experimental tools and production-ready systems will become more visible.

Creators and teams that recognize this shift early will not just generate better content. They will build workflows that support real production needs.